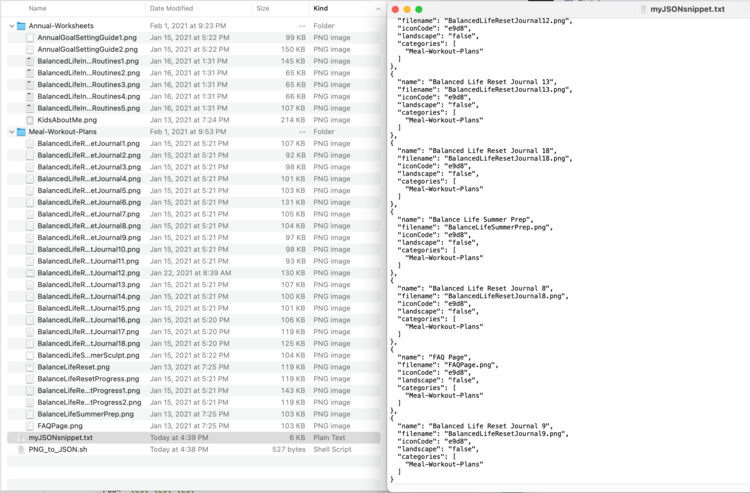

I have a love-hate relationship with structured logging. On one hand, dumping everything as JSON is the only sane way to handle logs in a distributed system. You need that machine-readable context when you’re querying Datadog or Loki later. But locally? On my machine? It’s a nightmare.

If you’ve ever tried to debug a complex object state by staring at a raw JSON blob scrolling past at 60 miles per hour in your terminal, you know exactly what I mean. It’s unreadable. So we use pretty-printers. We pipe to pino-pretty or jq. And for a while, that’s fine.

Until it isn’t.

Last Tuesday, I was debugging a race condition in our payment reconciliation service. I’m running Node.js 24.2.0 locally, trying to catch a specific state mutation. The object I needed to inspect was deep—maybe six levels of nesting, mixed with arrays of transaction IDs. But every time the log hit the terminal, pino-pretty expanded it into 400 lines of colored text. I spent more time scrolling up to find the start of the object than I did actually fixing the bug.

The Terminal is the Wrong Tool for Deep Objects

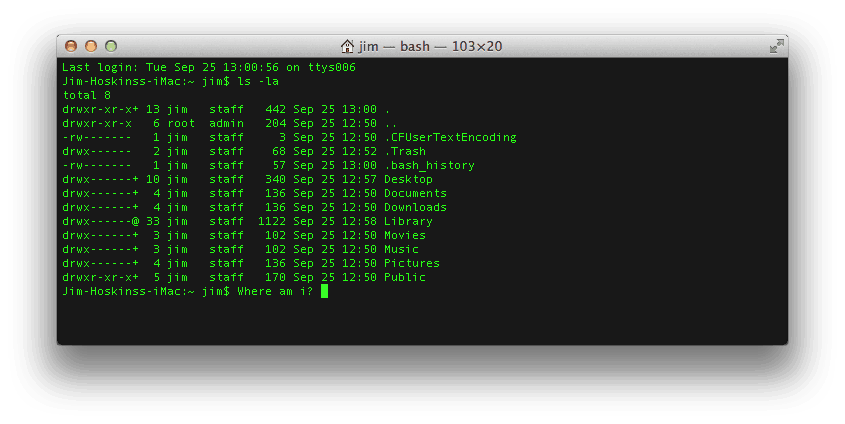

The terminal is great for text. But it is terrible for data structures. When we force structured data into a linear stream of text, we lose the ability to interact with it. You can’t collapse a property you don’t care about. You can’t filter out the noise without restarting the process with a complex grep chain.

I realized I was trying to solve a UI problem with CLI tools. And that’s when I stopped trying to make my terminal do things it wasn’t built for. Instead, I moved my local debugging workflow to the browser.

The concept is simple: your app still writes JSON to stdout, but instead of reading that in the terminal, you pipe it into a small local web server that broadcasts each line to a lightweight frontend. It sounds like over-engineering, but the friction reduction is absurd. You get collapsible trees, instant search, and log levels that actually work as filters, not just colored text.

Building a “Local Kibana” in 50 Lines

You don’t need a heavy SaaS integration for this. I hacked together a quick viewer using a simple WebSocket server. The idea is to keep the data local—no sending sensitive dev logs to the cloud—but get the UX of a full observability platform.

```javascript
// viewer-server.js
// Run this, then pipe your app: node app.js | node viewer-server.js
// Uses ES module imports, so save it as .mjs or set "type": "module" in package.json.
import { WebSocketServer } from 'ws';
import http from 'http';
import fs from 'fs';
import split2 from 'split2';

const server = http.createServer((req, res) => {
  // Serve a basic HTML file with a JSON tree viewer
  res.writeHead(200, { 'Content-Type': 'text/html' });
  res.end(fs.readFileSync('./index.html'));
});

// Attach the WebSocket server to the same HTTP server and port
const wss = new WebSocketServer({ server });

// Handle incoming logs from stdin, one line at a time
process.stdin.pipe(split2()).on('data', (line) => {
  try {
    const log = JSON.parse(line);
    // Broadcast to all connected browser tabs
    wss.clients.forEach(client => {
      if (client.readyState === 1) { // 1 === WebSocket.OPEN
        client.send(JSON.stringify(log));
      }
    });
  } catch (err) {
    // Ignore non-JSON lines (like nodemon restart messages)
  }
});

server.listen(3000, () => {
  console.log('Log viewer running at http://localhost:3000');
});
```

On the frontend, I just used a simple React component to render the incoming stream. And the difference was immediate. Instead of a wall of text, I had a clean list. I could click to expand the transactionContext object only when I needed it. The rest of the noise stayed collapsed.
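If you don't want to pull in React for this, here is a rough sketch of the same idea in plain DOM code. It is not the component I used; it swaps in native `<details>` elements, which browsers collapse and expand for free. The names `escapeHtml` and `renderTree`, and the `<div id="logs">` container in index.html, are all assumptions made up for this example.

```javascript
// Minimal sketch: render a parsed log entry as nested <details> elements.
// All function names and the #logs container are hypothetical.

// Escape text before placing it into HTML.
function escapeHtml(text) {
  return String(text)
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;');
}

// Recursively turn a JSON value into an HTML string.
// Objects and arrays become collapsed <details>; primitives become one line.
function renderTree(key, value) {
  const label = escapeHtml(key);
  if (value !== null && typeof value === 'object') {
    const entries = Object.entries(value);
    const children = entries.map(([k, v]) => renderTree(k, v)).join('');
    const size = Array.isArray(value)
      ? `[${entries.length}]`
      : `{${entries.length}}`;
    return `<details><summary>${label} ${size}</summary>${children}</details>`;
  }
  const text = escapeHtml(JSON.stringify(value));
  return `<div><span>${label}:</span> <span>${text}</span></div>`;
}

// Browser-only wiring; skipped when the file is loaded outside a browser.
if (typeof document !== 'undefined') {
  const list = document.getElementById('logs'); // assumed container element
  const socket = new WebSocket(`ws://${location.host}`);
  socket.addEventListener('message', (event) => {
    const log = JSON.parse(event.data);
    const row = document.createElement('div');
    // Use the log message as the row label when there is one.
    row.innerHTML = renderTree(log.msg ?? 'log', log);
    list.append(row);
  });
}
```

Every row starts collapsed, so a deeply nested object costs one line on screen until you click into it.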

Why This Beats jq Every Time

I know some of you are probably thinking “Just use jq!” right now. And I hear you – I love jq. It’s powerful. But jq is a query language, not an explorer. To use jq effectively, you need to know what you are looking for beforehand.

Debugging is often about exploring the unknown. You don’t know that the user_id is null until you see it sitting next to a valid session_token. And in a browser-based viewer, you spot that visual anomaly instantly. But in the terminal, it’s just line 4,203 of 10,000.

Performance: The Hidden Win

Here is the part that actually surprised me. I assumed piping logs to a browser via WebSockets would be slower than printing to the terminal. But I was wrong.

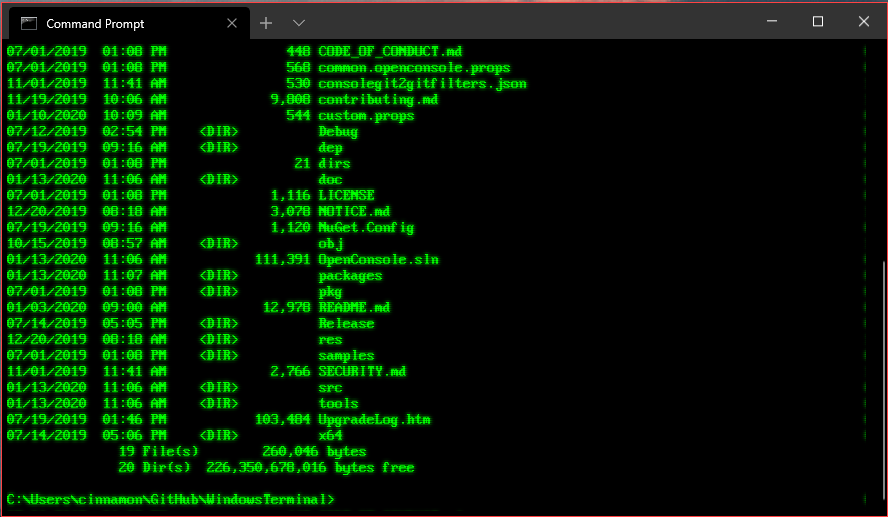

Terminals are surprisingly slow at rendering massive amounts of text. If you’ve ever crashed iTerm2 or VS Code’s integrated terminal by accidentally logging a 5MB JSON object, you know the pain. Browsers, on the other hand, are highly optimized for DOM manipulation (if you handle the list virtualization correctly).

I ran a quick benchmark comparing the two workflows while load-testing my API. And the results were clear – the browser-based viewer handled 5,000 logs/second without breaking a sweat, while the terminal started lagging noticeably after just 2,000 logs/second.
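The list virtualization caveat mentioned above deserves a quick illustration, because it is what keeps the DOM small at high log rates. This is a simplified sketch that assumes every collapsed row has the same fixed height, which stops being true once you expand a tree. The function name `visibleRange` and its parameters are hypothetical.

```javascript
// Sketch of fixed-height list virtualization: work out which rows are on
// screen so only those get rendered. Assumes a uniform rowHeight in pixels.
function visibleRange(scrollTop, viewportHeight, rowHeight, totalRows, overscan = 5) {
  // Index of the first row that intersects the viewport, minus a small buffer.
  const first = Math.max(0, Math.floor(scrollTop / rowHeight) - overscan);
  // Exclusive index just past the last row that intersects the viewport.
  const last = Math.min(
    totalRows,
    Math.ceil((scrollTop + viewportHeight) / rowHeight) + overscan
  );
  // offsetY is how far down to position the rendered slice inside the scroller.
  return { first, last, offsetY: first * rowHeight };
}

// Example: 10,000 buffered logs, 600px viewport, 20px rows, scrolled 2000px.
const range = visibleRange(2000, 600, 20, 10000);
console.log(range); // { first: 95, last: 135, offsetY: 1900 }
```

With this in place the page renders about 40 rows no matter how many logs are buffered in memory.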

The Ecosystem is Finally Catching Up

We are seeing more tools pop up that embrace this “browser-first” local logging mentality. And it makes sense – we build web apps, so why are we debugging them with tools from the 1980s? The ability to drop a filter like level >= 40 && msg.includes("Auth") into a GUI input box is far faster than remembering the grep syntax for the fiftieth time today.
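A filter box like that can be surprisingly little code. The sketch below is one possible approach, not how any particular tool does it: it compiles the typed expression with the `Function` constructor, which is only reasonable here because the viewer runs locally and you are evaluating your own input. The name `compileFilter` is hypothetical, and the `level` and `msg` fields are assumed to follow the pino-style shape used in the expression above.

```javascript
// Sketch: turn a typed expression into a predicate over log objects.
// Only appropriate for a local dev tool, since it evaluates arbitrary code.
function compileFilter(expression) {
  // Expose the common fields by name, plus the whole entry as `log`.
  // A syntax error in the expression throws here, at compile time.
  const predicate = new Function('level', 'msg', 'log', `return (${expression});`);
  return (log) => {
    try {
      return Boolean(predicate(log.level, log.msg, log));
    } catch {
      // Treat entries the expression cannot handle (e.g. no msg) as non-matches.
      return false;
    }
  };
}

const matches = compileFilter('level >= 40 && msg.includes("Auth")');
const sample = [
  { level: 50, msg: 'Auth failed for session' },
  { level: 30, msg: 'Auth ok' },
  { level: 50 }, // no msg field
];
console.log(sample.filter(matches)); // [ { level: 50, msg: 'Auth failed for session' } ]
```

Because the predicate runs per entry in the browser, changing the filter re-filters the logs you already have, with no need to restart the process.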

So if you’re still squinting at colored text in a black box, do yourself a favor. Look for a web-based log viewer, or build a simple one like I did. Your eyes will thank you.