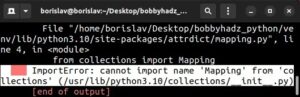

| Error Type | Description | Common Causes | Recommended Solutions |
|---|---|---|---|
| Value Error | A built-in error in Python, that usually arises when you pass an argument with the right type but an inappropriate value to a function. | | |

A common stumbling block when implementing and training your own CNN is the notorious “ValueError”. A ValueError is raised when an argument to a function call has the correct type but an unacceptable value. This is distinct from a TypeError, which is raised when the argument’s type itself is not what the function expects. A typical instance is a mismatch between the tensor shapes of adjacent layers in your CNN: for a layer to accept the output of its predecessor, the dimensions of that output tensor must match the input dimensions the layer expects.
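The shape rule can be checked by hand before any model is built. The following is a minimal sketch, in plain Python, of the standard output-size arithmetic for a 2D convolution without dilation; the helper name `conv2d_output_hw` is hypothetical and not part of Keras.

```python
import math

def conv2d_output_hw(input_hw, kernel_hw, strides=(1, 1), padding="valid"):
    """Return the (height, width) a 2D convolution produces (no dilation)."""
    out = []
    for n, k, s in zip(input_hw, kernel_hw, strides):
        if padding == "same":
            # 'same' pads the input so that only the stride shrinks it
            out.append(math.ceil(n / s))
        elif padding == "valid":
            # 'valid' uses no padding, so the kernel must fit entirely inside
            out.append((n - k) // s + 1)
        else:
            raise ValueError(f"unknown padding: {padding!r}")
    return tuple(out)

same_hw = conv2d_output_hw((150, 150), (3, 3), padding="same")
valid_hw = conv2d_output_hw((150, 150), (3, 3), padding="valid")
print(same_hw, valid_hw)
```

Comparing the computed size with the input size the next layer declares is often enough to locate a mismatch before training starts.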

Another possible reason for this error could be a complication in your layer architecture. Keep in mind, instead of diving straight into a complex structure, it’s often best to gradually develop complexity in your CNN. Start with fewer layers or smaller filter sizes, gradually enhancing the complexity as necessary.

A third cause of ValueError is an incorrect sequence of operations. This matters when working with Keras, especially at the model compilation and fitting stages: you must always compile your model before attempting to fit it to data. Skipping the compile step raises an error (a RuntimeError or a ValueError, depending on the Keras version).

In essence, debugging a ValueError demands a clear understanding of how a CNN works, a close look at your network architecture, and methodical testing of configuration variations until you identify the root cause. Remember, patience is a virtue (Python Docs).

Here’s a snippet illustrating potential mismatch in tensor shapes:

```python
from keras.models import Sequential
from keras.layers import Dense, Conv2D

model = Sequential()
model.add(Conv2D(32, (3, 3), padding='same', activation='relu', input_shape=(150, 150, 3)))
# Conv2D output is 4D: (None, 150, 150, 32) because padding='same'
model.add(Dense(64, activation='relu'))
# Dense acts on the last axis only, so the output is still 4D: (None, 150, 150, 64)
```

Keras accepts this stack, because a Dense layer applied to a 4D tensor simply operates on the last axis. The ValueError appears later, typically when you fit the model and its 4D output is compared with 2D labels of shape (None, num_classes). Layers such as Flatten or GlobalAveragePooling2D convert the 4D tensor into a 2D one before it is fed into the Dense layer.

Seeing a ValueError is quite common when you’re fine-tuning a convolutional neural network (CNN) model, especially if you’re modifying pre-existing architectures to suit your specific needs. For instance, if the dimensions of your input do not match the size required by your imported model, you are bound to encounter such an issue.

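To see how to fix them, consider two kinds of ValueError that come up during fine-tuning:

Structural Mismatches: Suppose you are using the VGG16 model, but your image shape is not 224x224x3 (height, width, color_channels), the shape the original VGG16 architecture expects. With the ImageNet weights, a different input_shape is only accepted when the fully connected top is left out:

```python
from keras.applications.vgg16 import VGG16

# include_top=False drops the dense head, which is tied to 224x224x3 inputs
model = VGG16(weights='imagenet', include_top=False, input_shape=(height, width, channels))
```

Here height, width and channels should match your dataset’s image dimensions; VGG16 requires exactly 3 channels and a height and width of at least 32.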

Class Label Mismatches: Here the number of units in the softmax layer does not correspond to the number of class labels. For instance, if your task is binary classification but your softmax layer has more than 2 units, you need to correct it.

```python
model.add(Dense(num_classes, activation='softmax'))
```

The num_classes should be equal to the number of classes in your dataset.

Consult the Keras Dense layer documentation and size the final dense layer of your custom CNN to the number of classes in your problem.

Given the above causes, here are some approaches to alleviate the Value Error issue:

For Structural Mismatches:

- Ensure you preprocess your images to match the dimension of the first layer of the base model.

- Check that the dimensionality of each consecutive layer matches the expected output from its precursor layer.
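For the second check, the usual repair when a Dense layer follows a convolution is a layer that collapses the 4D tensor to 2D. The sketch below assumes a recent Keras with `keras.Input`, uses a small 32x32 input purely to keep it light, and introduces a hypothetical helper `build_model`; it compares Flatten with GlobalAveragePooling2D.

```python
import keras
from keras import layers

def build_model(bridge):
    """Conv2D -> bridge -> Dense, where `bridge` turns the 4D tensor into 2D."""
    return keras.Sequential([
        keras.Input(shape=(32, 32, 3)),  # assumed input size for this sketch
        layers.Conv2D(32, (3, 3), padding="same", activation="relu"),
        bridge,
        layers.Dense(64, activation="relu"),
    ])

flat_model = build_model(layers.Flatten())
gap_model = build_model(layers.GlobalAveragePooling2D())

# Both heads now emit one 64-vector per sample
print(flat_model.output_shape, flat_model.count_params())
print(gap_model.output_shape, gap_model.count_params())
```

Flatten keeps every spatial position and therefore gives the Dense layer far more weights; global average pooling keeps one value per channel, which is usually the lighter choice.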

For Class Label Mismatches:

- Ensure that the last fully connected layer (usually a softmax or sigmoid layer for classification tasks) has the same number of nodes as your unique class labels.

- Encode your class labels in the format your loss function expects: one-hot vectors for categorical_crossentropy, or plain integer class ids for sparse_categorical_crossentropy.
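As an illustration of the label checks in this list, here is a minimal NumPy sketch of one-hot encoding. The label values are made up, and the code assumes class ids are integers running from 0 to num_classes - 1.

```python
import numpy as np

labels = np.array([0, 2, 1, 2, 0])   # made-up integer class ids
num_classes = int(labels.max()) + 1  # assumes ids cover 0 .. num_classes - 1

# Row i of the identity matrix is the one-hot vector for class i
one_hot = np.eye(num_classes, dtype="float32")[labels]

print(one_hot.shape)  # (samples, num_classes) must match the softmax layer width
```

If a class happens to be absent from the array, `labels.max() + 1` undercounts, so passing the known class count explicitly is the safer habit.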

In circumstances where these changes are non-trivial or impossible (for instance, if resizing your images significantly reduces their quality or changes the nature of the data), consider creating a custom architecture more suited to your data, or applying feature extraction and using a shallower classifier.

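A good fine-tuning practice is to keep parts of your model static while others remain trainable, as in this example:

```python
from keras.applications.vgg16 import VGG16
from keras.models import Model, Sequential
from keras.layers import Dense, Dropout, Flatten

num_classes = 1  # assumed here: one sigmoid unit for binary classification

# A fixed input_shape is needed so that base_model.output_shape is fully defined
base_model = VGG16(weights='imagenet', include_top=False, input_shape=(224, 224, 3))
base_model.trainable = False  # keep the pre-trained convolutional base static
print("Model loaded.")

# Build a classifier model to put on top of the convolutional model
top_model = Sequential()
top_model.add(Flatten(input_shape=base_model.output_shape[1:]))
top_model.add(Dense(256, activation='relu'))
top_model.add(Dropout(0.5))
top_model.add(Dense(num_classes, activation='sigmoid'))

# Join base and classifier, then compile with an appropriate loss function
model = Model(inputs=base_model.input, outputs=top_model(base_model.output))
model.compile(optimizer='adam', loss='binary_crossentropy', metrics=['accuracy'])
```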

As can be seen, the upper layers of the CNN are replaced with an architecture specific to the classification problem at hand. Take special care with your input and output dimensions while you are at it! Keep iterating on your model, because achieving high performance can demand various combinations of layers and options. You can learn more about each available option in Keras’ comprehensive API documentation.

To run a fine-tuned Convolutional Neural Network (CNN) and avoid the ubiquitous ValueError, it’s essential to pay attention to a few key aspects of fine-tuning deep learning models. Depending on how they are configured, these aspects are frequently the root cause of problems. Let’s delve into some of these critical areas:

Model Architecture

The architecture of your deep learning model can play a significant role in seamless fine-tuning. In the context of CNNs, this pertains to the layout of convolutional layers, pooling layers, fully connected layers, dropout layers, etc. Ensure you’re employing a suitable pre-trained model commensurate with your domain-specific data. For instance, models like ResNet or VGG16 can bring about robust performance on image-based tasks.

Moreover, the layers’ dimensions must align, and a misalignment is a frequent culprit behind ValueErrors. If your input size doesn’t match what your model expects, Keras will raise a ValueError. Verify that the dimensions line up across your model before fine-tuning.

Fine-Tuning Strategy

In the fine-tuning phase, certain layers of a pre-trained model are “unfrozen” and optimized while others remain static. To avoid overfitting, care should be taken when selecting the layers to be fine-tuned. The proper selection of layers to adjust during the process depends on both the complexity of the new task and the amount of the available data.

The general strategy involves training the end layer(s) while freezing the initial ones, as the early layers capture universal features such as edges and colors, whereas deeper layers discern more specific concepts related to the problem at hand. Nonetheless, if you encounter an issue where gradients are not flowing back through some layers (resulting in a ValueError), check that these layers haven’t been inadvertently frozen.
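The freeze-early, train-late strategy can be sketched as follows. A tiny untrained network stands in for a real pre-trained base so that nothing is downloaded; the layer names (`early_conv`, `late_conv`, `new_head`) and sizes are assumptions made for this illustration only.

```python
import keras
from keras import layers

# Tiny stand-in for a pre-trained base; names and sizes are made up
base = keras.Sequential([
    keras.Input(shape=(32, 32, 3)),
    layers.Conv2D(8, 3, activation="relu", name="early_conv"),
    layers.Conv2D(16, 3, activation="relu", name="late_conv"),
    layers.GlobalAveragePooling2D(),
], name="base")

# Freeze every layer, then unfreeze only the deepest convolution
for layer in base.layers:
    layer.trainable = False
base.get_layer("late_conv").trainable = True

# Attach a new classification head that is trained from scratch
inputs = keras.Input(shape=(32, 32, 3))
features = base(inputs)
outputs = layers.Dense(2, activation="softmax", name="new_head")(features)
model = keras.Model(inputs, outputs)

# Listing the flags makes an accidentally frozen layer easy to spot
for layer in base.layers:
    print(layer.name, "trainable" if layer.trainable else "frozen")
print("trainable weight tensors:", len(model.trainable_weights))
```

Changes to `trainable` only take effect for training once the model is compiled again, so the flags are best set before calling `compile`.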

Data Preprocessing

Data preprocessing is just as vital when fine-tuning your model as in any other phase of modeling. Since image CNNs are strict about their input format, ensure that the inputs are reshaped correctly, normalized consistently, and compatible with your model’s expectations.

Here is a simple code snippet for a typical image preprocessing pipeline:

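```python
import numpy as np
from keras.utils import load_img  # older Keras: keras.preprocessing.image.load_img

# Load the image as RGB and resize it to (224, 224) for VGG16
img = load_img("path_to_your_image.jpg", target_size=(224, 224))

# Convert the image to an array of shape (224, 224, 3)
data = np.asarray(img, dtype="float32")

# Add the batch dimension and normalize to [0, 1]
data = data.reshape((1, 224, 224, 3))
# Note: ImageNet-pretrained VGG16 weights expect
# keras.applications.vgg16.preprocess_input rather than division by 255
data = data / 255.0
```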

As misunderstandings related to processing might lead to a ValueError or misshapen results, being familiar with your model’s input requirements and implementing appropriate methods is paramount.

Learning Rate

A key variable worth considering is the learning rate. During the fine-tuning process, using a lower learning rate is advised since high rates may obliterate the learnt weights. However, having it too low could make the training unnecessarily slow or even halt. Try a range of values to establish the best rate for your problem.
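The trade-off is easy to see on a toy problem. The sketch below runs plain gradient descent on f(w) = w², which is not a CNN but shows the same behavior; the function name `descend` and the three rates are chosen only for illustration.

```python
def descend(learning_rate, steps=50, start=1.0):
    """Minimize f(w) = w**2 by gradient descent and return the final w."""
    w = start
    for _ in range(steps):
        grad = 2.0 * w             # derivative of w**2
        w -= learning_rate * grad  # standard gradient step
    return w

too_high = descend(1.1)   # every step overshoots the minimum and |w| grows
moderate = descend(0.1)   # converges quickly toward 0
too_low = descend(1e-4)   # barely moves away from the starting point

print(too_high, moderate, too_low)
```

In a real network the diverging case is what wipes out pre-trained weights, which is why fine-tuning runs usually start well below the rate used for training from scratch.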

Thoughtful and attentive fine-tuning of your deep learning models will make ValueErrors easier to avoid and improve the effectiveness of your model, so I hope these tips set you on the right path. Remember to always experiment and iterate based on the unique features and needs of your specific use case. Some insightful references on this topic include Fine-tune CNN or not? and TensorFlow: Training and evaluating models.

Running a fine-tuned Convolutional Neural Network (CNN) involves several steps and meticulous attention to detail. While this process might seem complicated initially, you can simplify it by following the tips below. These tips will also help you avoid common errors such as the ‘ValueError’, which can occur at several points during the execution of your program.

Ensure Correct Shapes

One of the most common issues that results in a ValueError is incorrect shape specification, either for the input data or intermediate calculations. You should always ensure to reshape the input data in accordance with what your model expects. The structure of the reshaped dataset typically follows:

```python
# Assuming `dataset` is your input data
reshaped_data = dataset.reshape((num_samples, image_dim1, image_dim2, num_channels))
```

The ‘num_samples’ corresponds to the number of samples or entries in your dataset, ‘image_dim1’ and ‘image_dim2’ refer to the dimensions of your images, and ‘num_channels’ refers to the color channels of the images (usually 3 for RGB images, 1 for greyscale).
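A common concrete case is greyscale data stored without a channel axis. This is a small NumPy sketch with placeholder zeros standing in for real images; it also shows the ValueError NumPy itself raises when the requested shape does not hold the same number of elements.

```python
import numpy as np

gray_images = np.zeros((10, 28, 28), dtype="float32")  # placeholder data

# Add the missing channel axis: (10, 28, 28) -> (10, 28, 28, 1)
with_channel = np.expand_dims(gray_images, axis=-1)
print(with_channel.shape)

# Reshaping cannot invent data: asking for 3 channels fails
try:
    gray_images.reshape((10, 28, 28, 3))
except ValueError as err:
    message = str(err)
    print(message)
```

The second half matters because `reshape` only rearranges existing elements; turning greyscale into three channels needs an explicit repeat or conversion.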

Weights Initialization

The initial weights of the CNN layers can also contribute to errors. Extreme initial values are amplified by the repeated multiplications across layers, so activations and losses can overflow to inf or NaN, which may then surface as errors further down the pipeline rather than as a ValueError in the layer itself. One way to prevent this is to initialize your weights properly, for instance with the Glorot or He initializer.

Keras uses the Glorot uniform initializer by default for Dense and convolutional layers when none is specified. Here is how to set the He initializer explicitly per layer:

```python
from keras.models import Sequential
from keras.layers import Dense
from keras.initializers import he_normal

model = Sequential()
model.add(Dense(32, activation='relu', kernel_initializer=he_normal(seed=None), input_dim=100))
model.add(Dense(10, activation='softmax'))
```
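To make the difference between the two schemes concrete, this sketch computes the scale each one uses for a layer with 100 inputs and 32 outputs, matching the first Dense layer in the snippet. The formulas are the standard definitions; the function names are hypothetical helpers, not Keras APIs.

```python
import math

def glorot_uniform_limit(fan_in, fan_out):
    """Glorot uniform draws weights from [-limit, limit]."""
    return math.sqrt(6.0 / (fan_in + fan_out))

def he_normal_stddev(fan_in):
    """He normal draws weights from a zero-centered normal with this stddev."""
    return math.sqrt(2.0 / fan_in)

limit = glorot_uniform_limit(100, 32)
stddev = he_normal_stddev(100)
print(round(limit, 4), round(stddev, 4))
```

Both scales shrink as the layer gets wider, which is what keeps activations from growing layer after layer.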

Avoid Class Imbalance

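Class imbalance is a common problem and can contribute to a ValueError indirectly, for example when a rare class is missing from one split and the encoded labels no longer match the width of the output layer. Distributing your classes evenly across the training and validation datasets helps. If possible, use techniques like the Synthetic Minority Over-sampling Technique (SMOTE) or ADASYN to balance your classes.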

Set Proper Learning Rate

An inappropriate learning rate can also lead to errors. A very high learning rate can cause your weights to grow extremely large, making the loss undefined (NaN), which may surface as an error later in training. On the other hand, an excessively low learning rate makes your network learn too slowly or not at all. It is therefore crucial to choose a learning rate suited to your problem. Optimizers that adapt the step size per parameter, such as AdaGrad, RMSProp, and Adam, make training less sensitive to this choice.

Here is a sample implementation of setting a learning rate using the Adam optimizer:

```python
import keras

# Setting up the Adam optimizer
# (0.01 is ten times Adam's default of 0.001; fine-tuning usually calls for less)
adam = keras.optimizers.Adam(learning_rate=0.01)

# Compiling the model
model.compile(loss='categorical_crossentropy', optimizer=adam)
```

Note that these recommendations depend heavily on the specifics of your dataset, the architecture of your CNN, and your goals. Refer to the official Keras documentation, Detectron tutorials, or consult experts on platforms such as Stack Overflow or Reddit for specific cases. Remember that building models is iterative, so don’t be disheartened if improvements are incremental. Happy coding!

While training your Convolutional Neural Network (CNN) model, you may experience value errors. This could be because of a mismatch in the tensor dimensions, incorrect input shape, faulty dataset labels, or even due to an error in the fine-tuning strategy employed.

Let’s delve deeper into each of these potential problem areas:

Mismatch in Tensor Dimensions

The most frequent reason is a dimension mismatch. For a CNN to work, each layer’s input must match what the layer was built for; for example, the number of channels in the input must equal the depth of the convolution filters. If this prerequisite isn’t met, a ValueError is raised.

If the exception points to a shape problem, you can call `model.summary()` to inspect the output shape of each layer in Keras.

Incorrect Input Shape

An incorrect input shape can also cause value errors. Double-check the defined input shape and verify if it matches the actual shape of your input data. For example, an image processing model often takes a 4-D array as input: (batch_size, height, width, channels). You might encounter a value error if you give it a 2-D array instead.

In Python, we reshape data using the `numpy.reshape()` function:

```python
import numpy as np

data = np.array(data)
reshaped_data = data.reshape((batch_size, height, width, channels))
```
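Before handing data to the model, it can help to compare its shape with the expected one and fail with a readable message. The following is a sketch of such a guard; `check_batch_shape` is a hypothetical helper, and the tuple `(None, 224, 224, 3)` merely stands in for whatever `model.input_shape` reports.

```python
import numpy as np

def check_batch_shape(batch, expected_shape):
    """Raise ValueError if `batch` does not fit `expected_shape`.

    `expected_shape` follows the Keras convention, e.g. (None, 224, 224, 3),
    where None means "any size" (usually the batch axis).
    """
    if batch.ndim != len(expected_shape):
        raise ValueError(
            f"expected {len(expected_shape)} dimensions {expected_shape}, "
            f"got {batch.ndim} dimensions {batch.shape}"
        )
    for axis, (actual, expected) in enumerate(zip(batch.shape, expected_shape)):
        if expected is not None and actual != expected:
            raise ValueError(f"axis {axis}: expected size {expected}, got {actual}")

expected = (None, 224, 224, 3)  # stand-in for model.input_shape
check_batch_shape(np.zeros((2, 224, 224, 3)), expected)  # passes silently

try:
    check_batch_shape(np.zeros((2, 224 * 224 * 3)), expected)  # flattened 2-D array
except ValueError as err:
    error_message = str(err)
    print(error_message)
```

The guard reports which axis is wrong, which is often quicker to act on than the framework's own message.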

Faulty Dataset Labels

Sometimes the dataset labels are not correctly formatted, which causes issues during the training stage. It’s important that your labels have the same shape as the output of your final layer. If the CNN performs binary classification, the last layer should have one unit (typically with a sigmoid activation) and the labels should be binary (0 or 1).
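For the binary case, the labels can be brought into the shape of a one-unit output layer as sketched here. The class names are invented for the example, and the mapping simply assigns ids in alphabetical order.

```python
import numpy as np

raw_labels = ["cat", "dog", "dog", "cat"]  # made-up string labels

# Map each class name to an integer id (alphabetical order: cat=0, dog=1)
class_names = sorted(set(raw_labels))
class_to_id = {name: index for index, name in enumerate(class_names)}

# Shape (samples, 1) matches a final Dense layer with a single unit
y = np.array([class_to_id[name] for name in raw_labels], dtype="float32").reshape(-1, 1)
print(y.shape, y.ravel())
```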

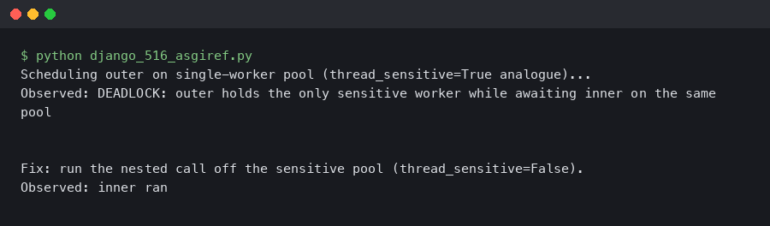

Fine-Tuning Strategy

While re-training or fine-tuning your model on a new task, make sure the number of units in the final fully connected layer matches the number of new output classes. A ValueError often appears if you forget to change this when moving from, say, a 1000-class task to a 2-class task.

Fine-tuning involves two steps:

- Freeze the convolutional base, which behaves as a feature extractor. You then combine this with a new classifier which will be trained from scratch.

- Once your new classifier has been trained, depending on the particular case, you might want to unfreeze all or part of the convolutional base and retrain it on the new data, as it might be beneficial for this base to adapt more to the specific features of the data in question.

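```python
import keras

# Assumed base for this example: VGG16 without its top, sized for 128x128 RGB inputs
base_model = keras.applications.VGG16(weights='imagenet', include_top=False, input_shape=(128, 128, 3))

# Freeze all layers in the base model
base_model.trainable = False

# Create new model on top
inputs = keras.Input(shape=(128, 128, 3))
x = base_model(inputs, training=False)  # run the base in inference mode
x = keras.layers.GlobalAveragePooling2D()(x)
outputs = keras.layers.Dense(1)(x)  # a single logit for binary classification
model = keras.Model(inputs, outputs)
```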

These are a few diagnostic strategies that you can apply to find and address any ValueError you encounter while running a fine-tuned CNN. Thorough examination of these areas will ensure smoother operation and improved performance of your models.

For additional details about dealing with TensorFlow or Keras error messages, head to the official TensorFlow guide available at tensorflow.org.

Fine-tuning a Convolutional Neural Network (CNN) involves adjusting the weights of an already trained model to better suit your specific task. This process improves the model’s performance efficiently, but it has pitfalls if not done carefully. One such challenge is the ValueError, which typically occurs when the input supplied to the CNN doesn’t match what it expects.

To help you navigate through this challenge, let’s dive deep into some ways you can optimally fine-tune your CNN model without getting stuck in the trap of the infamous Value Error.

Understanding Your Data

Before jumping straight into tuning a neural network model, first understand your data. Verify that your images are correctly labeled according to their respective classes and check for any imbalance within classes. Remember, great models are built on great data.
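A quick way to check for imbalance is to count the labels before any training. This sketch uses only the standard library; the label list is fabricated for illustration.

```python
from collections import Counter

labels = ["cat"] * 90 + ["dog"] * 10  # made-up labels for illustration

counts = Counter(labels)
total = sum(counts.values())

# Share of the dataset taken by each class
shares = {name: count / total for name, count in counts.items()}

# Ratio between the largest and smallest class; 1.0 means perfectly balanced
imbalance_ratio = max(counts.values()) / min(counts.values())

print(counts, shares, imbalance_ratio)
```

The same count also tells you how many units the final layer needs, since `len(counts)` is the number of distinct classes.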

Now, let’s talk about how we can tackle the Value Error.

Solving the Value Error

The Value Error often shows up when there is a size mismatch between your dataset and the requirements of your model. Thus, before running the model, ensure:

• The size of your input matches the expected input size.

• You have transformed your data so that it suits the requirement of the model.

• The labels of your dataset match the output shape of the network.

Here’s a code snippet for reshaping the input data.